Fulldome Camera For Unity

Suite of tools for building games for Fulldome.

This repository offers three approaches to simulating a fisheye lens in Unity.

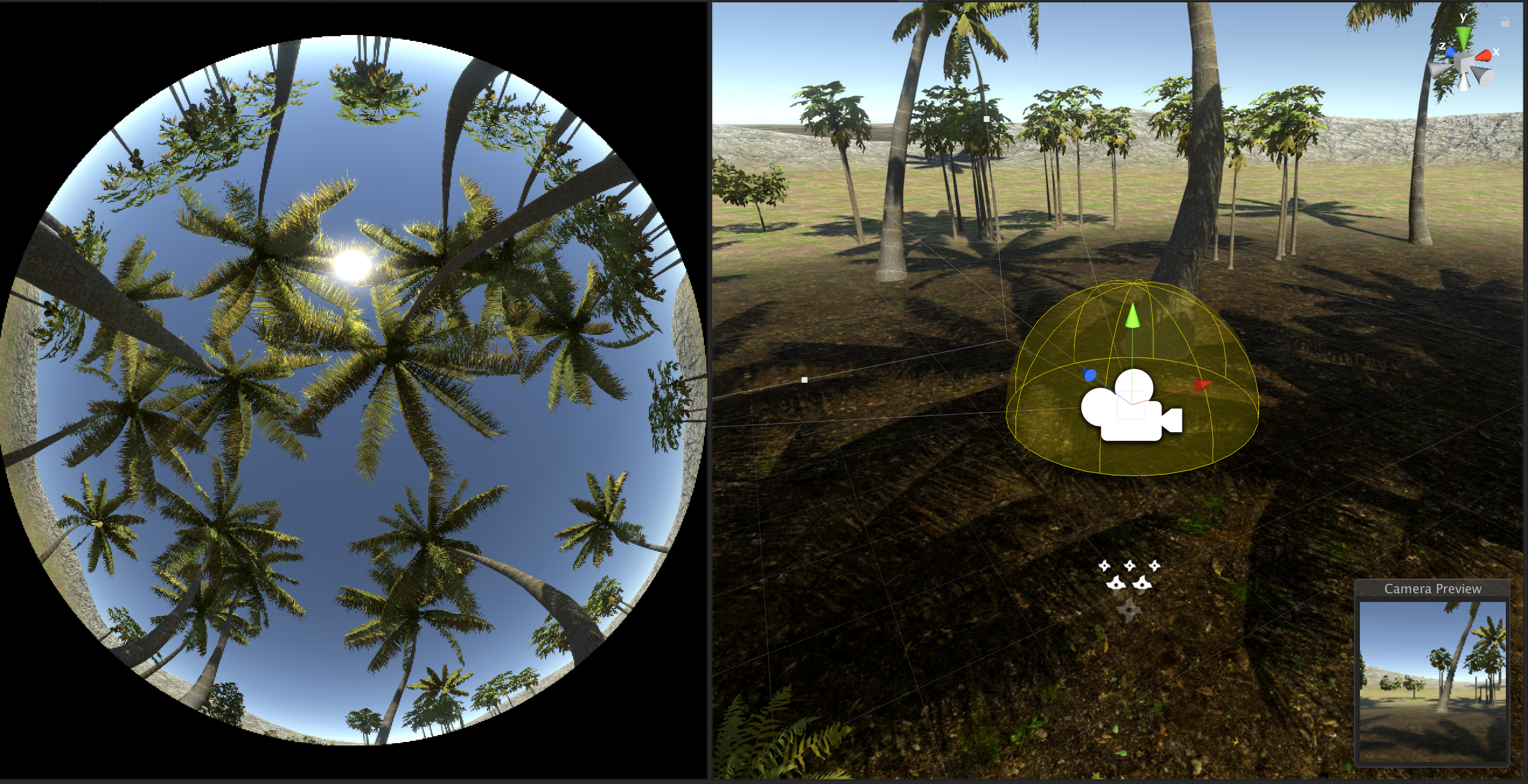

- Fulldome Camera: Renders the active camera as a 360 panorama (internally a Cubemap) and extracts a Domemaster from it.

- VFX Graph (experimental): No camera tricks, use custom VFX blocks to distort particles around the camera, as if it was a Fisheye lens

- Shader Graph (experimental): No camera tricks, use custom Shader Graph nodes to distort mesh vertices around the camera, as if it was a Fisheye lens

Get inspired and share your works at the Unity 3D Fulldome Development and Fulldome Artists United groups.

There’s plenty of information and considerations about the Fulldome format in my Blendy 360 Cam manual.

Downloads

- HDRP, compatible starting from Unity 2019.2

  - Download and import the latest Release package.

  - Or… Clone or download this repository and open as a full project in Unity.

  - Or… For specific Unity versions, checkout by tag: 2019.2

- Legacy Standard Renderer (not HDRP/URP). Download from the Releases page or checkout by tag: 2019.1 / 2018.3 / 2018.2 / 2018.1

- For Unity 5.6, get FulldomeCameraForUnity5

1) Fulldome Camera (HDRP)

How it works

This approach was inspired by this article, and relies on the Camera.RenderToCubemap method first available in Unity 2018.1. It will render your game’s camera as a cubemap and distort it to a Domemaster format.
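
As a rough illustration of the distortion step, the sketch below maps a Domemaster pixel to a view direction and then to the cubemap face that would be sampled. It is written in Python rather than as the plugin's shader, and it assumes an equidistant fisheye with the dome zenith along +Y and no tilt or orientation offset. The function names are hypothetical and none of this is taken from FulldomeCamera.cs.

```python
import math


def domemaster_to_direction(u, v, horizon_deg=180.0):
    """Map normalized Domemaster coords (u, v in [-1, 1]) to a unit direction.

    Assumption: equidistant fisheye, so the distance from the image center
    is proportional to the angle away from the zenith (+Y).
    Returns None for points outside the fisheye circle.
    """
    r = math.hypot(u, v)
    if r > 1.0:
        return None
    theta = r * math.radians(horizon_deg) / 2.0  # angle away from the zenith
    phi = math.atan2(v, u)                       # angle around the zenith
    s = math.sin(theta)
    return (s * math.cos(phi), math.cos(theta), s * math.sin(phi))


def cubemap_face(direction):
    """Return the name of the cubemap face whose axis dominates the direction."""
    x, y, z = direction
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax >= ay and ax >= az:
        return "PositiveX" if x > 0 else "NegativeX"
    if ay >= az:
        return "PositiveY" if y > 0 else "NegativeY"
    return "PositiveZ" if z > 0 else "NegativeZ"


def faces_used(resolution=64, horizon_deg=180.0):
    """Collect the faces touched by every pixel center of a square frame."""
    faces = set()
    for j in range(resolution):
        for i in range(resolution):
            u = (i + 0.5) / resolution * 2.0 - 1.0
            v = (j + 0.5) / resolution * 2.0 - 1.0
            d = domemaster_to_direction(u, v, horizon_deg)
            if d is not None:
                faces.add(cubemap_face(d))
    return faces
```

Under these assumptions, a 180 degree dome samples five faces and never touches NegativeY, while a much wider horizon eventually needs all six.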

In terms of performance and quality, this solution is far from ideal. To make a cubemap, we need to render the scene up to 6 times, with 6 different cameras, one for each face of the cube. Rendering a good-looking game once is already a challenge; now imagine doing it six times. Another problem is that some effects and shaders that depend on the camera position, like reflections, will look wrong where the cube faces meet, because neighboring pixels were calculated for different cameras. Front-facing sprites, commonly used for particles, suffer from the same problem.

Ideally, Unity would provide us with a custom camera that, instead of using the usual frustum to rasterize each frame, would use our own method to calculate a ray from the camera to the world for each pixel, as Cinema 4D plugins can do. But there’s no way to do it in Unity 😦

There’s an issue in Unity Feedback that proposes a solution to that problem. The request description doesn’t sound like it, but the solution to the problem is the same (see my comment). Please give it some votes.

And here’s the Unity forum thread.

Usage

This plugin needs just one script to run.

Create a GameObject and add the FulldomeForUnity/FulldomeCamera/Scripts/FulldomeCamera.cs script to it. Drag the scene’s Main Camera into the Main Camera field. The Main Camera component can now be disabled, since its output will be replaced by the final texture.

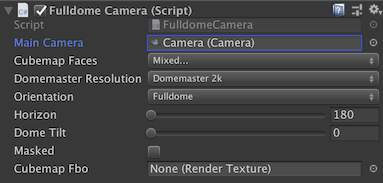

Configure your fulldome camera on this GameObject…

- Main Camera: The camera used to render the cubemap. If null, Camera.main will be used.

- Cubemap Faces: Depending on your camera orientation and Horizon setting, you can turn off some cubemap faces and save several passes. Flat cameras placed on the ground can turn off the NegativeY, for example.

- Orientation: The point of interest, or sweet spot, on a Fulldome is close to the horizon on the bottom of the frame. On a Fisheye, it’s on the center of the frame. This setting will consider this and rotate the main camera to target the correct sweet spot.

- DomeMaster Resolution: Resolution of the generated Domemaster frame, always square.

- Horizon: Usually 180 degrees (half sphere).

- Dome Tilt: Most planetariums are tilted, giving a more comfortable experience to viewers. Enter the venue tilt here.

- Masked: Ignores the area outside the fisheye circle and paints it black. The circle covers π/4 of the square frame, so that’s about 21% fewer pixels to process. Please mask.

- Cubemap Fro: Optional Cubemap Render Texture for the base Cubemap render that will be used to extract the Domemaster. If left empty, the script will automatically create a render texture with the same dimensions as the Domemaster.
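
The saving from Masked can be checked with a little arithmetic. The sketch below, a Python illustration with hypothetical names and not part of the plugin, counts the pixel centers that fall outside the circle inscribed in a square frame and compares the result with the analytic value 1 − π/4. The outside area is about 21.5% of the square; put the other way around, the full square holds about 27% more pixels than the circle.

```python
import math


def masked_fraction(resolution):
    """Fraction of a square frame's pixel centers lying outside the inscribed circle."""
    half = resolution / 2.0
    outside = 0
    for j in range(resolution):
        for i in range(resolution):
            dx = i + 0.5 - half
            dy = j + 0.5 - half
            if dx * dx + dy * dy > half * half:
                outside += 1
    return outside / (resolution * resolution)


# Analytic values for comparison.
outside_share = 1.0 - math.pi / 4.0   # share of the square outside the circle
square_excess = 4.0 / math.pi - 1.0   # extra pixels in the square relative to the circle
```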

Examples

- FulldomeCameraHDRP: Performance is quite bad right now, let’s try to find why and help fix it?

- FulldomeCameraStandard: Same as previous but using Standard assets. Please note that the SRP Asset must be excluded (Project Settings, Graphics, Scriptable Render Pipeline Settings).

- FulldomeCameraLegacy: Uses the original implementation of this approach, using scripts from the Legacy folder. It worked exactly the same, but used the Camera component callbacks OnPreRender and OnPostRender as entry points to generate the Domemaster frame, and was a little more complicated to set up. It was not compatible with the Scriptable Render Pipeline. For more details, check the 2019.1 README.

Performance

Since we’re rendering 5 to 6 cameras each frame, some care needs to be taken with performance. Some post effects, like reflections and bloom, will behave weirdly, and since they also affect performance, it’s better to disable them completely.

Looking at the Frame Debugger, we can pinpoint some choke points, research which effects use them, and disable those effects, either at the Camera’s Frame Setting Override (my preference) or in Project Settings > Quality > HDRP Settings. Removing these render settings helped a lot to improve performance:

- Disable Refraction

- Disable Distortion

- Disable SSR

- Disable Post > Depth Of Field

- Disable Post > Bloom

Also…

- Disable Lightning & Realtime GI

- Avoid Visual Environment / Sky / Fog

2) VFX Graph

Work in Progress, some tests are available at FulldomeForUnity/Xperiments/VFXGraph.

No camera tricks! Please do not use together with the FulldomeCamera.cs script.

Use a set of custom VFX blocks to distort particles around the camera, as if it was a Fisheye lens.

3) Shader Graph

Work in Progress, some tests available at FulldomeForUnity/Xperiments/ShaderGraph.

No camera tricks! Please do not use together with the FulldomeCamera.cs script.

Adapt your Shader Graph materials using a custom node to distort mesh vertices around the camera, as if it was a Fisheye lens.

The main problem with this approach right now is that the SRP cameras do not have traditional Camera matrices to do the proper transformations. I’m still not sure if it’s a bug or it’s just how it works.
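
To make the idea of distorting vertices concrete, here is a minimal Python sketch of the kind of math such a node would need. It is not the custom node from this repository. It assumes the vertex is already expressed in camera space, with the camera looking along +Z, and that the lens is an equidistant fisheye; the function name and parameters are hypothetical.

```python
import math


def fisheye_project(x, y, z, horizon_deg=180.0):
    """Project a camera-space point to normalized fisheye coords.

    Assumptions: camera looks along +Z, equidistant fisheye.
    Returns (px, py, dist), where (px, py) lies inside the unit circle
    when the point is within the horizon, and dist is the distance to
    the camera, kept for depth ordering.
    """
    lateral = math.hypot(x, y)
    theta = math.atan2(lateral, z)                  # angle from the optical axis
    r = theta / (math.radians(horizon_deg) / 2.0)   # 1.0 at the horizon
    phi = math.atan2(y, x)                          # 0 for points on the axis
    dist = math.sqrt(x * x + y * y + z * z)
    return (r * math.cos(phi), r * math.sin(phi), dist)
```

A point straight ahead lands at the center of the frame, and a point at 90 degrees to the side lands on the edge of a 180 degree fisheye. Points behind the camera get r greater than 1 and would have to be culled or masked.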

Capture

You can render scenes from Unity for your Fulldome movie, of course!

Download Unity Recorder from the Package Manager, and export the Game View.

…And From Unity To The Dome

Fulldome without a Dome is no fun at all!

Here’s how I do it.

The game is played on a Mac (Mac Pro, MBP Touchbar or even MBP Retina), using a Syphon plugin to stream the RenderTexture to Blendy Dome VJ, which takes care of mapping the dome and sending the signal to 4 projectors, using a Datapath FX-4 card.

For a single projector with fisheye lenses, you can just fullscreen and mirror the screen to the projector. Or even better, run windowed, sending the Fulldome image to another application that can output it to the projector (see below), and you have your monitor free while playing the game.

There are many texture sharing frameworks that can be used to stream textures between applications, using a client-server model.

Syphon (macOS only)

Syphon is the first texture sharing framework to become popular among VJs, supported by all VJ apps.

To create a Syphon server, use KlakSyphon. Just add a SyphonServer component to your FulldomeCamera instance.

Works with the Metal API only. See Enabling Metal.

Spout (Windows only)

Spout is the equivalent of Syphon on Windows, supported by all VJ apps.

To create a Spout server, use KlakSpout. Just add a SpoutSender component to your FulldomeCamera instance.

NewTek NDI (Windows & macOS)

NDI has the advantage of sending textures over a network. Its adoption is growing very fast, and it is being used in many TV studios as a clean and cheap capture solution.

To create an NDI server, use KlakNDI. Just add an NdiSender component to your FulldomeCamera instance.

KlakNDI does not work on Metal yet, but a Syphon server can be converted to NDI using NDISyphon, a very nice little tool by Vidvox.

With this, the game can be played on one computer (Windows or macOS) running an NDI server, while another Mac on the network receives the stream with NDISyphon and projects it with Blendy Dome VJ.

BlackSyphon (macOS only)

BlackSyphon, also by Vidvox, creates a Syphon server from a Black Magic capture card.

So the game can be played in fullscreen (Windows or macOS), sending a mirror image to a Black Magic capture on a Mac, reading it with BlackSyphon, projecting with Blendy Dome VJ.

Credits

Developed by Roger Sodré of Studio Avante and United VJs.

Many thanks to all these people whose work allowed us to get to this point…

Paul Bourke for his amazing dome research

Anton Marini and Tom Butterworth for revolutionizing visuals with Syphon.

David Lublin of Vidvox for all the freebie tools and for the amazing VDMX.